The San Francisco Consensus

Last week at the [un]prompted conference in San Francisco, the question on everyone's mind was: What happens when Time-to-Exploit reaches zero?

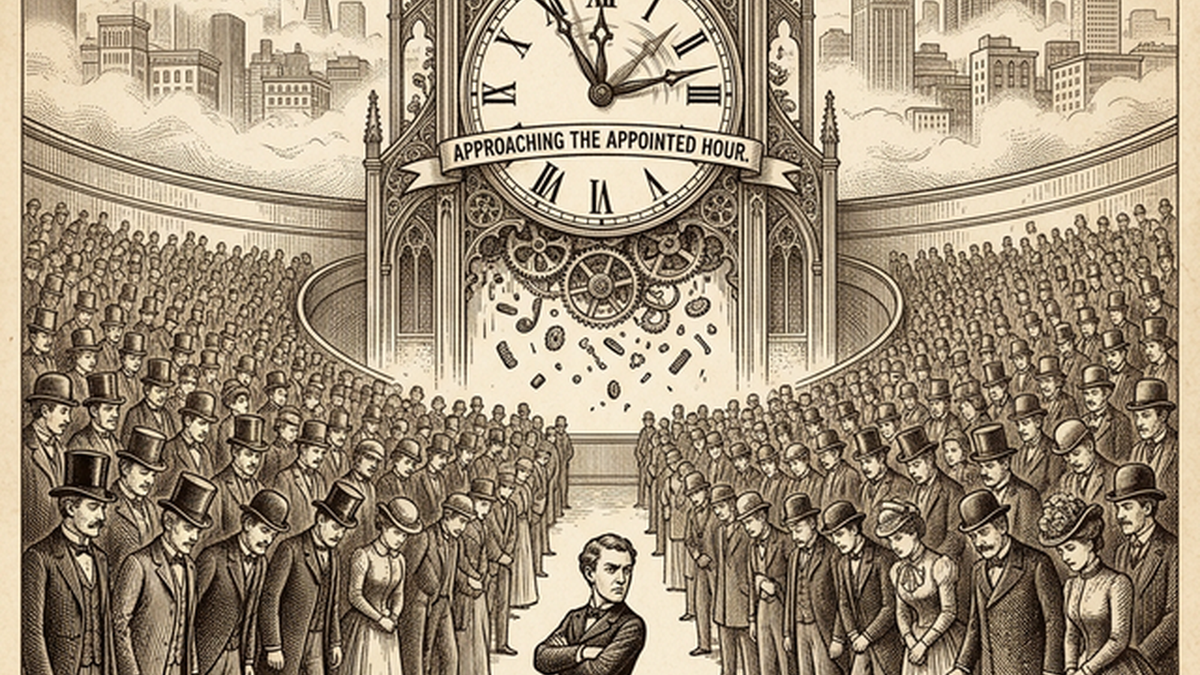

The Problem, As Told By The Clock

The Zero Day Clock project shows a single, collapsing trendline. In 2018, the median time between a vulnerability being publicly disclosed and the first observed exploit was 771 days. Defenders had over two years to patch. By 2025, that window had compressed to under a week. The prediction now is that within this year, 2026, disclosed CVEs will be exploited within hours of their release. Meanwhile, even the most efficient vulnerability management programs often require weeks to test and deploy a patch. Fixing a vulnerability now takes longer than exploiting it. This is the zero-day apocalypse.
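To make that arithmetic concrete, here is a minimal sketch of the gap between exploit and patch. Only the 771-day figure comes from the numbers above. The 30-day patch cycle, the 7-day stand-in for "under a week," and the 6-hour stand-in for "hours" are assumptions chosen for illustration, and `exposure_days` is a hypothetical helper, not part of the Zero Day Clock project.

```python
def exposure_days(time_to_exploit_days: float, time_to_patch_days: float) -> float:
    """Days a system is exploitable before its patch lands (never negative)."""
    return max(0.0, time_to_patch_days - time_to_exploit_days)


# Assumption: a 30-day patch cycle, standing in for "weeks to test and deploy".
PATCH_CYCLE_DAYS = 30.0

# Median time-to-exploit, in days. 771 is quoted in the text; 7.0 is an upper
# bound for "under a week"; 0.25 (6 hours) is a hypothetical value for "hours".
scenarios = {
    "2018": 771.0,
    "2025": 7.0,
    "2026 (predicted)": 0.25,
}

exposure = {
    label: exposure_days(tte, PATCH_CYCLE_DAYS)
    for label, tte in scenarios.items()
}

for label, days in exposure.items():
    print(f"{label}: exposed for {days:g} days")
```

Under these assumptions the exposure window is zero in 2018, 23 days in 2025, and nearly the full patch cycle once exploits arrive within hours. The exact numbers matter less than the sign flip: the patch used to arrive first, and now it doesn't.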

The San Francisco Consensus

And so a consensus has formed.

- AI-driven vulnerability discovery is exponential

- Exploit generation will become effectively free

- The disclosure model is broken beyond repair

- The Internet and technology are about to come under constant, undefendable attack

I call it the San Francisco Consensus, and I have some healthy critiques of it, not because it's wrong on the facts, but because its conclusion is misplaced. The data is real. The trendlines are real. But the conclusions people are drawing from them have a suspiciously uniform shape, and they skip over some inconvenient observations. San Francisco is always cosplaying a future, whether it's crypto, scooters, or Waymo. But it forgets that it's rehearsing one possible outcome, not the inevitable one.

My Skepticism

Someone has to make the argument for why the trendline on the Zero Day Clock won't result in the end of the world. Might as well be me.

Here's what the consensus misses:

There are still a finite number of threat actors, and they still have motivations. This sounds obvious, but it matters. The idea that attacks will exponentially increase as the cost of discovering zero-days goes to zero ignores a basic fact: the supply of exploitable vulnerabilities has never been the bottleneck. The bottleneck is the number of actors with the intent, infrastructure, and operational capacity to use them. Making exploit generation cheaper doesn't automatically manufacture more adversaries with reasons to attack your organization.

Availability doesn't always increase usage. The cannabis legalization experiment in the US offers a lesson for the zero-day apocalypse. When states began legalizing marijuana, opponents predicted societal collapse or, at a minimum, increased usage and skyrocketing addiction. What actually happened? Usage rates barely changed. The sky didn't fall. The predicted catastrophe was a projection of theoretical availability onto actual human behavior, and it was wrong. The zero-day apocalypse makes the same category of error: assuming that because something becomes possible, it will happen at scale.

The definition of "security vulnerability" in these calculations is very narrow. The Zero Day Clock measures CVEs, traditional software bugs with a disclosure lifecycle. But the vast majority of breaches don't start with a novel zero-day. They start with misconfigurations, credential theft, social engineering, and the boring, inglorious fundamentals we've been failing at for decades. Focusing exclusively on the exploit timeline creates a dramatic narrative, but it may not be the threat model that actually matters for most organizations.

We've never actually been "winning." The framing of the zero-day apocalypse implies we're about to lose something we currently have, some secure state of our systems that existed before AI. But we've never had that. What we have is an agreed-upon stasis: a managed level of insecurity that allows the world to keep functioning, something both attackers and defenders are invested in. The zero-day apocalypse isn't the end of secure systems, but it might be the start of pretending we had them.

The Risk of Consensus

There is a lot of groupthink happening right now on the coasts, among technologists who, for better or worse, are enamored with the whiz-bangs of their own creations. I don't trust those deepest within the technology to extract themselves and provide clear guidance on broader societal implications. The people building the AI are not the right people to tell us what it means.

Perhaps the real AI-driven problem on the rise isn't the zero-day apocalypse at all. Perhaps it's something quieter: self-inflicted availability issues, embedded recursive complexity, systems so intertwined with AI-generated code that no human understands them anymore. Whatever it is, if the apocalypse comes, it probably won't look like the one San Francisco is rehearsing for.

Kat Traxler attended the [un]prompted conference in San Francisco, March 2026.